Neural networks lie at the heart of Machine learning revolution.

They work exceptionally well for tasks otherwise considered impossible to code classically. Consider the example of the task to differentiate between images of cats and dogs

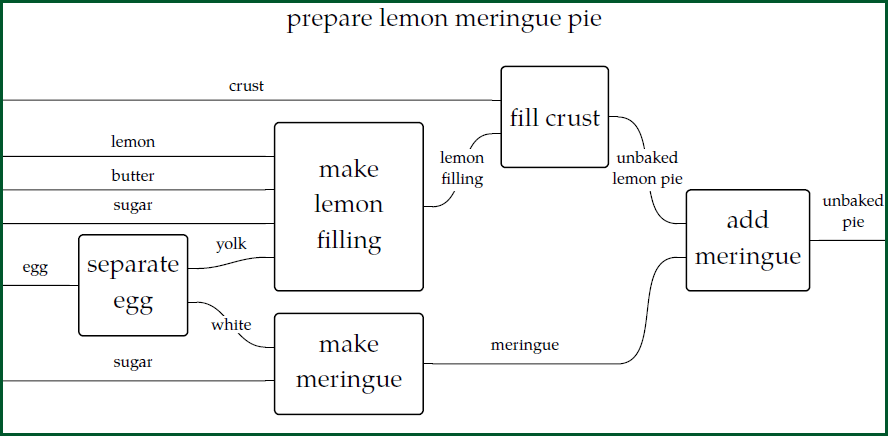

Classical computer programs are like a recipe. Just like a set of clear and precise set of instructions to be followed by a machine.

But how do you instruct a machine to differentiate between cats and dogs?

A task which we humans are so well versed at. But do we know how we do it? 🙄

Imagine explaining it to an alien who has never seen it!

Easy? You are still imagining it talking to your friend. Remember it’s an alien. You can’t use the words eyes, ear, nose, fur!

But what animal is the this one?

Answer

Cat, duh!

Even if the image in terms of pixels is different from the first one in so many ways, our mind has has no problem identifying it. We have seen so many examples of cats that now our brain has its own approximation model of what a cat is.

Machine learning takes similar approach. Neural networks were designed to mimic how neurons supposedly behave in our brains. How accurately they represent actual brain is questionable. But they are surely highly effective in doing specific tasks similar to or sometimes better than human brains.

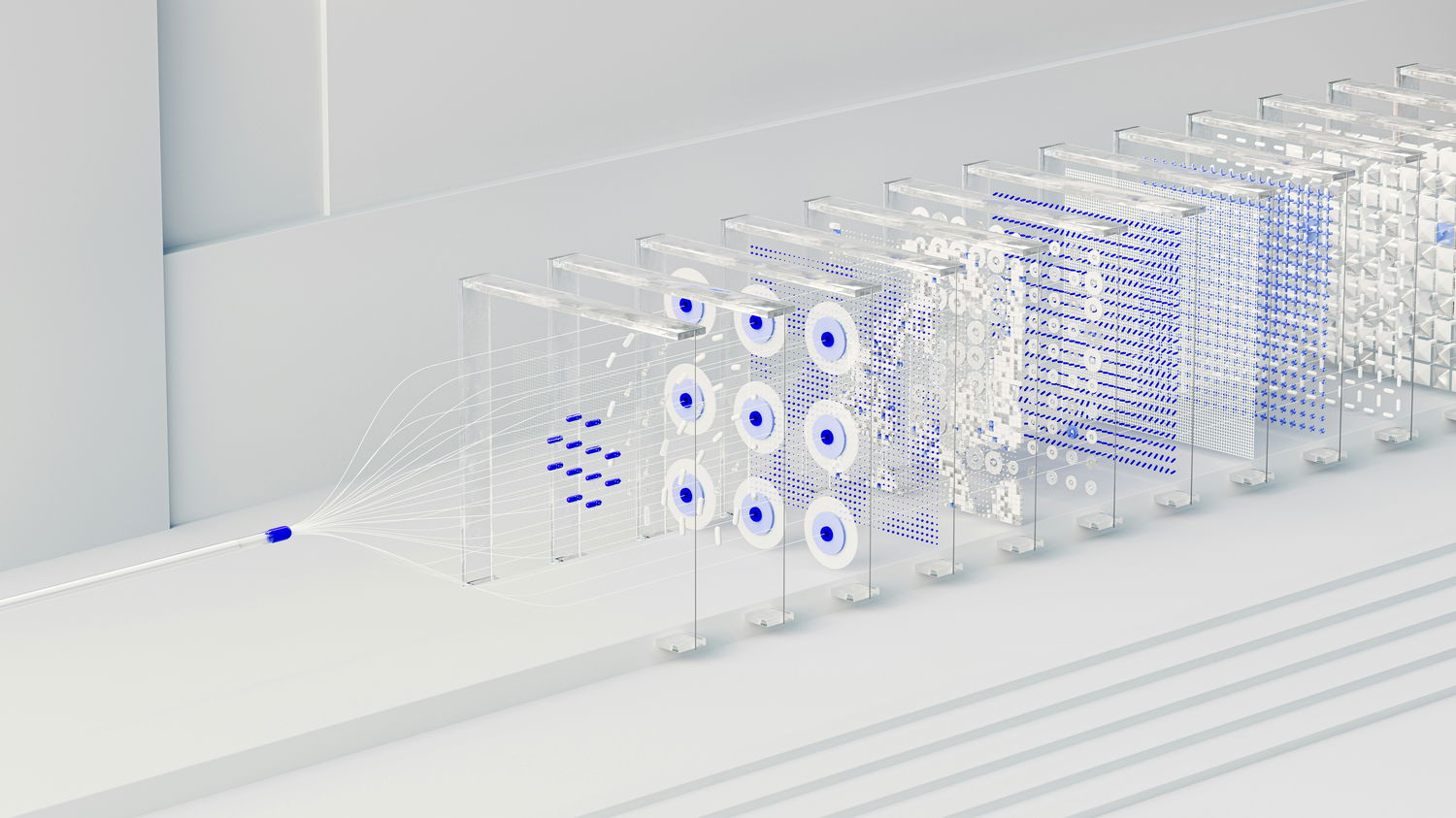

A neural network acts like a box with input and output along-with layers of nodes inside that does the computations. Let’s come back to our task of differentiating between cats and dogs.

Since the box only deal with numbers, let assign numbers, say 1 for dogs and 2 for cats for output. Each input image is already a grid containing numbers in the form of pixel values. So this box inputs an image and spits out either number 1 or number 2.

Now this is how we will train the box:

We will take take 5000 images of cat and dogs and feed that as an input in the box. This step is called a forward pass.

Each time we feed an image of a dog and the box output number 1 we tell the box it is right and if it output 2 we tell it its wrong. And vice-versa for the image of the cat. This is called assigning a loss function for the network.

In each cycle whenever the box is told it is wrong, it recalibrates its nodes through a process called back-propogation.

Until finally it has seen enough examples and has calibrated its nodes such that any time you input an image of a dog it splits 1 and and 2 if its a cat with say 95% accuracy.

Voila! Our box is trained for the task.

Note:

- While training we make sure that we show our box as many different types of images of cats and dogs for a better example.

- This training process can be a challenging step and can be hard for the network to learn. That's where we might have to tweak the connections of nodes inside the box.

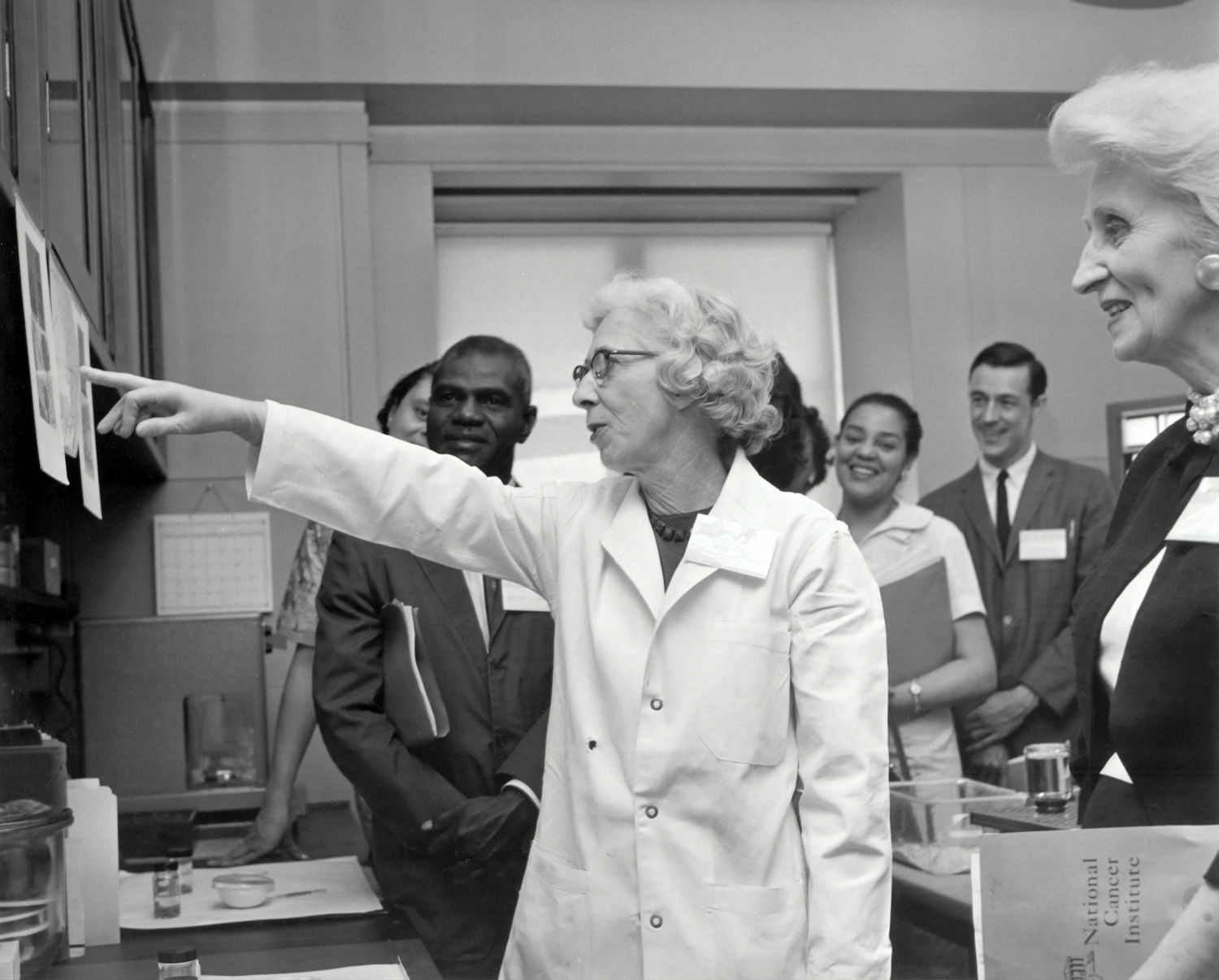

The applicability of these networks goes beyond the fun tasks as above. Such networks have been trained for various tasks including cancer prediction, self-driving cars, stock market predictions, and so on.